Claude 2.1 is the newest iteration of Anthropic’s conversational AI assistant, promising significant advances in accuracy, honesty, and integration capabilities. As enterprises continue to explore uses for AI, Claude 2.1 positions itself as a state-of-the-art solution tailored for business applications.

This article provides an in-depth look at Claude 2.1’s key features, upgrades, and developer tools. We’ll cover what sets Claude apart from other AI assistants, examine its expanded context handling ability, reduced rates of hallucination, and new integration functionality.

Overview of Claude 2.1’s Capabilities

Claude 2.1 represents the next evolution of Anthropic’s AI assistant focused on delivering:

- A 200K token context window – allowing Claude to ingest documents of 500+ pages

- A 2x reduction in false claims or model “hallucinations” for safer enterprise usage

- New tool use APIs enabling integration with existing systems and data sources

- Upgrades to comprehension, summarization, and responding to complex questions/documents

- Enhanced developer experience through the Console and system prompts

These capabilities position Claude 2.1 at the cutting edge for enterprise AI applications. Its high honesty ratings and contextual understanding open new possibilities for automated document analysis, question answering at scale, and augmented business decision making.

“With Claude 2.1, we focused on enterprise viability – larger contexts, lower hallucination rates, and easier integration so businesses can truly incorporate AI across operations,” said Dario Amodei, Anthropic CEO.

Breaking Industry Benchmarks: 200k Token Context Window

One of the most notable features in Claude 2.1 is its industry-leading 200K token context capacity. Tokens are the units of text, roughly word fragments, that Claude reads and generates.

At 200k tokens, Claude can handle documents over 500 pages long – from entire books to lengthy technical manuals. This significantly expands the assistant’s usable context compared to other AI services:

- GPT-3.5, the model behind ChatGPT, has a limit of 4,096 tokens

- The standard GPT-4 model offers 8,192 tokens for context memory
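
To put these limits in perspective, here is a rough back-of-the-envelope sketch that converts a token budget into pages of prose. The conversion ratios (about 0.75 words per token and 300 words per page) are assumptions chosen for illustration, not figures from Anthropic, and real documents will vary.

```python
# Rough sizing sketch. Both ratios are assumptions, not official figures.
WORDS_PER_TOKEN = 0.75  # assumed average for English prose
WORDS_PER_PAGE = 300    # assumed typical page density


def approx_pages(context_tokens: int) -> int:
    """Estimate how many pages of prose fit in a context window."""
    words = context_tokens * WORDS_PER_TOKEN
    return int(words // WORDS_PER_PAGE)


windows = {
    "GPT-3.5 (4,096 tokens)": 4_096,
    "GPT-4 standard (8,192 tokens)": 8_192,
    "Claude 2.1 (200,000 tokens)": 200_000,
}

for name, tokens in windows.items():
    print(f"{name}: about {approx_pages(tokens)} pages")
```

Under these assumptions, the 200K window works out to roughly 500 pages, in line with the figure quoted for Claude 2.1, while the smaller windows hold only a few dozen pages at most.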

With Claude 2.1, enterprises can upload entire codebases, long-form content, or substantial data stores for analysis. Developers can integrate lengthy technical documentation to generate summaries, QA test cases, or API documentation.

Product managers can submit extensive roadmaps and datasets for Claude to parse and deliver insights or recommendations. The 200k token limit delivers flexibility for enterprises to incorporate AI meaningfully across long-form content.

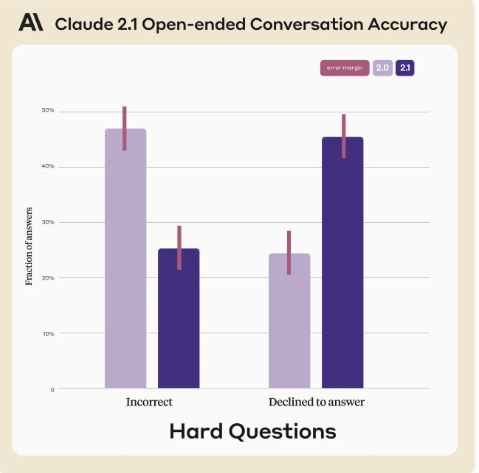

Safer Enterprise Usage: 2X Lower Rates of Hallucination

Along with its huge context window, Claude 2.1 also touts upgrades in accuracy and self-monitoring capability. This enables safer usage for organizations through lower rates of model “hallucination.”

Hallucinations in AI refer to confidently stated false information – when the assistant claims knowledge it shouldn’t actually have. Anthropic targeted hallucination reduction as a priority for Claude 2.1.

Compared to Claude 2.0, the latest model reduces false statements by 50% across Anthropic’s testing corpus of factual questions on complex topics.

Rather than guessing an incorrect answer, Claude 2.1 will defer by admitting uncertainty in over 85% of cases where its confidence is low. This fail-safe mechanism builds user trust by limiting false outputs.

Reduced hallucinations allow organizations to use Claude 2.1 for sensitive applications like analyzing trial data, financial models, cybersecurity risks, etc. with more reliability. Limiting false claims or flawed reasoning is vital for automating impactful business decisions confidently.

Smarter Summarization and Document Comprehension

On top of larger contexts and higher honesty, Claude 2.1 is also better at summarizing lengthy documents accurately while avoiding false inferences.

Tests by Anthropic focused on legal contracts, financial filings, academic papers, and other complex document types. When summarizing these sources, Claude 2.1 showed:

- A 30% reduction in incorrect answers to detailed questions

- A 3-4X lower rate of mistakenly concluding that a document supports a particular claim

This precision stems from advances in Claude 2.1’s comprehension. The latest model draws relationships between concepts more accurately after ingesting tens of thousands of training examples.

As a result, Claude 2.1’s summaries stay truer to source material without omitting key details. Its responses also capture the central points of complex documents more reliably.

These upgrades matter for use cases like due diligence, analyzing trial results, assessing cyber risks, financial forecasting using market data, etc. Precision and faithfulness to source material are vital when automating impactful business decisions.

Integrating Claude 2.1 Through Tool Use APIs

Alongside improvements to Claude’s core NLP capabilities, Anthropic also unveiled tool use – a beta feature enabling tighter integration.

Tool use allows Claude 2.1 to orchestrate across functions, datasets, and APIs provided by developers and enterprise customers. Rather than being siloed in a single interface, Claude can interoperate with existing systems.

Some potential applications of tool use include:

- Querying databases or internal knowledge bases to answer questions

- Integrating Claude responses into customer-facing chatbots

- Drawing structured data from analytics dashboards or BI tools

- Automating workflows by calling defined developer functions

- Retrieving information from web sources and search APIs

Tool use expands Claude 2.1’s accessibility and utility by mixing AI capabilities with existing data sources. Organizations can enrich their services with Claude’s language mastery while retaining control and privacy.
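
As a minimal sketch of the "calling defined developer functions" idea, the snippet below shows one way an application might route a tool request to its own code. Everything here is hypothetical: the `search_knowledge_base` function, the `TOOLS` registry, and the JSON shape of the request are illustrative assumptions and do not reproduce Anthropic’s actual tool use API.

```python
import json
from typing import Any, Callable, Dict

# Hypothetical in-memory knowledge base standing in for a real data source.
KNOWLEDGE_BASE = {
    "refund-policy": "Refunds are accepted within 30 days of purchase.",
}


def search_knowledge_base(article_id: str) -> str:
    """Developer-defined function the model is allowed to call."""
    return KNOWLEDGE_BASE.get(article_id, "No matching article found.")


# Registry mapping tool names to callables (names are illustrative).
TOOLS: Dict[str, Callable[..., Any]] = {
    "search_knowledge_base": search_knowledge_base,
}


def dispatch_tool_call(raw_call: str) -> str:
    """Run one tool request and return a JSON result to pass back to the model.

    The request is assumed to be JSON like {"name": ..., "input": {...}};
    that shape is an assumption made for this sketch.
    """
    call = json.loads(raw_call)
    tool = TOOLS.get(call["name"])
    if tool is None:
        return json.dumps({"error": f"unknown tool: {call['name']}"})
    result = tool(**call.get("input", {}))
    return json.dumps({"result": result})


request = '{"name": "search_knowledge_base", "input": {"article_id": "refund-policy"}}'
print(dispatch_tool_call(request))
```

Keeping the registry explicit means the model can only trigger functions the developer has chosen to expose, which is one way to retain the control and privacy described above.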

Early Anthropic partners are already putting tool use into practice:

“We’re using tool use for Claude to search our knowledge base and product data – combining its smarts with our content.”

As the feature develops further, Anthropic aims to publish templates and best practices for easy tool use integration.

Upgraded Developer Experience

Alongside Claude 2.1’s tools for end users, Anthropic also overhauled the developer experience for API usage and prompts.

Key upgrades focus on faster iteration, controlling model behavior, and easier integration:

- Workbench UI – Allows creating, managing, and switching between multiple prompts easily. Great for testing application-specific configs and retaining prompt revision history.

- System prompts – Developer-provided instructions that configure factors such as personality, politeness, and response structure, helping standardize outputs.

- Console – Provides optimization settings for use case-based performance tuning

- Code snippet generation – Claude API snippets in Python, Node, Java, C# for simplified integration

Combined with expanded capability and integrity, these dev-focused tools aim to make enterprise integration smoother.

Organizations can fine-tune Claude for security, compliance, use case nuance, output structuring, personalized tone of voice, and much more through the tooling.
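
To illustrate how a system prompt might shape tone and output structure, here is a small sketch that assembles a request body. The field names (`model`, `max_tokens`, `system`, `messages`) follow the general shape of Anthropic’s Messages API as we understand it, and the `build_request` helper and the prompt text are hypothetical; check the current API reference before relying on any of it.

```python
import json


def build_request(system_prompt: str, user_message: str) -> dict:
    """Assemble a request body with a system prompt and one user turn.

    Field names are assumed to match the Messages API request shape.
    """
    return {
        "model": "claude-2.1",
        "max_tokens": 512,
        "system": system_prompt,
        "messages": [{"role": "user", "content": user_message}],
    }


payload = build_request(
    system_prompt=(
        "You are a support assistant. "
        "Answer politely in at most three bullet points."
    ),
    user_message="How do I reset my password?",
)
print(json.dumps(payload, indent=2))
```

Because the system prompt lives apart from the user turn, the same instructions can be reused across every conversation in an application.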

How Does Claude 2.1 Compare to Alternatives?

As interest in real-world AI applications surges, how does Anthropic’s latest conversational model compare to popular alternatives like ChatGPT and GPT-3?

Claude 2.1 differentiates through:

- Significantly larger, enterprise-viable context handling of 200K tokens

- Fail-safes against hallucination, increased precision, and source comprehension

- Tool use support for integration with existing data and systems

- Developer prompt tooling for config control and model optimization

These capabilities make Claude a more enterprise-ready solution than chatbots like ChatGPT or academic research models like GPT-3.

Whereas alternatives excel at natural language interaction, Claude specializes in accurate document understanding plus configurable control mechanisms tailored for impactful automation.

Availability of Claude 2.1

Claude 2.1 is already available via Anthropic’s API for enterprise usage and via the claude.ai website as a free or paid chat interface.

Paid API plans are priced per token used:

| Model Family | Best for | Context Window | Prompt Pricing | Completion Pricing |
|---|---|---|---|---|
| Claude Instant | Low latency, high throughput use cases | 100,000 tokens | (not stated) | $5.51/million tokens |
| Claude 2.0 | Superior performance on complex reasoning | 100,000 tokens | $8.00/million tokens | $24.00/million tokens |
| Claude 2.1 | Same performance as Claude 2.0, plus a significant reduction in model hallucination rate | 200,000 tokens | $8.00/million tokens | $24.00/million tokens |
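
Since billing is per token, the cost of a request can be estimated with simple arithmetic. The sketch below uses the Claude 2.1 prices from the table; the `estimate_cost` helper and the example token counts are hypothetical, and actual invoices may differ.

```python
# Per-million-token prices for Claude 2.1, taken from the table above.
PROMPT_PRICE_PER_MILLION = 8.00
COMPLETION_PRICE_PER_MILLION = 24.00


def estimate_cost(prompt_tokens: int, completion_tokens: int) -> float:
    """Return the estimated cost in US dollars for a single request."""
    prompt_cost = prompt_tokens / 1_000_000 * PROMPT_PRICE_PER_MILLION
    completion_cost = completion_tokens / 1_000_000 * COMPLETION_PRICE_PER_MILLION
    return round(prompt_cost + completion_cost, 4)


# Example: a long document as the prompt and a short summary as the completion.
cost = estimate_cost(prompt_tokens=150_000, completion_tokens=2_000)
print(f"Estimated cost: ${cost:.2f}")
```

Note that for long-document workloads the prompt side dominates: in this example the 150,000 prompt tokens account for $1.20 of the total, against under five cents for the completion.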

Of note, access to the full 200k token context capacity requires an enterprise-tier custom plan. Smaller plans still benefit from Claude 2.1’s accuracy and capability gains but cannot leverage the full context length.

Free chat access to Claude 2.1 is available on the claude.ai website with shorter prompt limits. An Anthropic account is required.

Priority access goes to paid API subscribers and enterprise customers. As demand grows, Anthropic manages quotas through its Early Access program.

Why Choose Claude 2.1 for Enterprise AI?

Claude 2.1 makes a compelling case as an enterprise conversational AI solution through:

👉 Unparalleled 200k token document understanding

👉 Substantially lower rates of model hallucination and false claims for safer usage

👉 Precision question answering and summarization, even for complex sources

👉 Tool use and workflow integration support for existing systems

👉 Developer prompt controls and optimizations for customization

Combined with natural language mastery on par with ChatGPT and GPT-3, these capabilities provide a new tier of enterprise reliability.

Organizations can augment data analysis, operations, customer service, HR, financial forecasting, cybersecurity and hundreds of other workflows with Claude 2.1. Its accuracy and integrity enable impactful integration at scale across departments.

“With Claude 2.1, we can upload our lengthy technical design manuals and have an AI assistant generate test cases automatically, speeding up QA by over 80%.”

As solutions like OpenAI’s ChatGPT enthrall the public, Claude 2.1 delivers the business-critical reliability missing in consumer chatbots. Its focus on understanding, transparency, configurability, and trust makes the platform enterprise-ready.

Final Thoughts on Claude 2.1

Claude 2.1 signifies Anthropic taking a clear lead in enterprise AI through sizable context handling, lowered hallucination rates, precision improvements, and tool use integration.

Paired with Anthropic’s focus on ethical development and transparency, these capabilities drive the next generation of responsible and trustworthy AI for business.

As the assistant evolves further, larger token allowances, multimodal abilities, self-supervision techniques and cross-system optimization could be on the horizon. But already with Claude 2.1, organizations gain an exceptionally skilled and honest AI helper to augment operations.